Monday review - Myers' dBG Paper, Pacbio's Multiplexing and Bioinformaticians' Foray into Escapism

1. Correcting Long Noisy Reads Using de Bruijn Graphs

Great news - the algorithmic concepts for short read assembly developed over the last decade need not be unlearned. In the two papers presented below, Myers, Pevzner and their colleagues use de Bruijn graphs for assembly and error correction of long noisy reads.

Non Hybrid Long Read Consensus Using Local De Bruijn Graph Assembly

While second generation sequencing led to a vast increase in sequenced data, the shorter reads which came with it made assembly a much harder task and for some regions impossible with only short read data. This changed again with the advent of third generation long read sequencers. The length of the long reads allows a much better resolution of repetitive regions, their high error rate however is a major challenge. Using the data successfully requires to remove most of the sequencing errors. The first hybrid correction methods used low noise second generation data to correct third generation data, but this approach has issues when it is unclear where to place the short reads due to repeats and also because second generation sequencers fail to sequence some regions which third generation sequencers work on. Later non hybrid methods appeared. ** We present a new method for non hybrid long read error correction based on De Bruijn graph assembly of short windows of long reads with subsequent combination of these correct windows to corrected long reads. ** Our experiments show that this method yields a better correction than other state of the art non hybrid correction approaches.

Assembly of Long Error-Prone Reads Using de Bruijn Graphs

The recent breakthroughs in assembling long error-prone reads (such as reads generated by Single Molecule Real Time technology) were based on the overlap-layout-consensus approach and did not utilize the strengths of the alternative de Bruijn graph approach to genome assembly. Moreover, these studies often assume that applications of the de Bruijn graph approach are limited to short and accurate reads and that the overlap-layout-consensus approach is the only practical paradigm for assembling long error-prone reads. Below we show how to generalize de Bruijn graphs to assemble long error-prone reads and describe the ABruijn assembler, which results in more accurate genome reconstructions than the existing state-of-the-art algorithms.

2. Pacbio Technology Turns More Awesome, While Nanopore Fails to Deliver

Pacbio recently released multiplexing technology that will reduce the cost of bactetial genome (5-10MB) sequencing to below $100/genome. “Mike the Mad Biologist” writes -

PacBio Finally Makes A Move It Desperately Needed To Make

So PacBio finally released a protocol that enables multiplex sequencing–the ability to sequence multiple bacterial genomes at one time:

This document describes a procedure for multiplexing 5 Mb microbial genomes up to 12-plex and 2 Mb genomes up to 16-plex, with complete genomes assemblies (<10 contigs). The workflow is compatible for both the PacBio RSII and Sequel Systems. 10kb SMRTbell libraries are constructed for each sample through shearing and Exo VII treatment before going through the DNA Damage Repair and End-Repair steps. After End-Repair, barcoded adapters are ligated to each sample. Following ligation, samples are pooled, treated with Exo III and VII, and then put through two 0.45X AMPure® PB bead purification steps. Note that size-selection using a BluePippin™ system is not required. SMRTLink v4.0 is utilized to demultiplex and assemble the genomes after sequencing….

For this procedure, the required total mass of DNA, after pooling, is 1 – 2 μg. Therefore, the required amount of sheared DNA, per microbe, going into Exo VII treatment is 1 μg divided by the number of microbes. For example, in a 12-plex library, 1 μg ÷ 12 microbes = 83 ng of sheared DNA is needed for Exo VII treatment.

Translated into English, you can sequence 12 E. coli at once, or 16 Camplyobacter. This makes the sequencing cost per bacterium very affordable. The quality of these genomes, if they’re similar to previous PacBio bacterial genomes, is quite high, both in terms of contiguity (figuring out where the genes are relative to each other) and sequence accuracy (the latter, as best as I can tell, is still a problem for Oxford Nanopore).

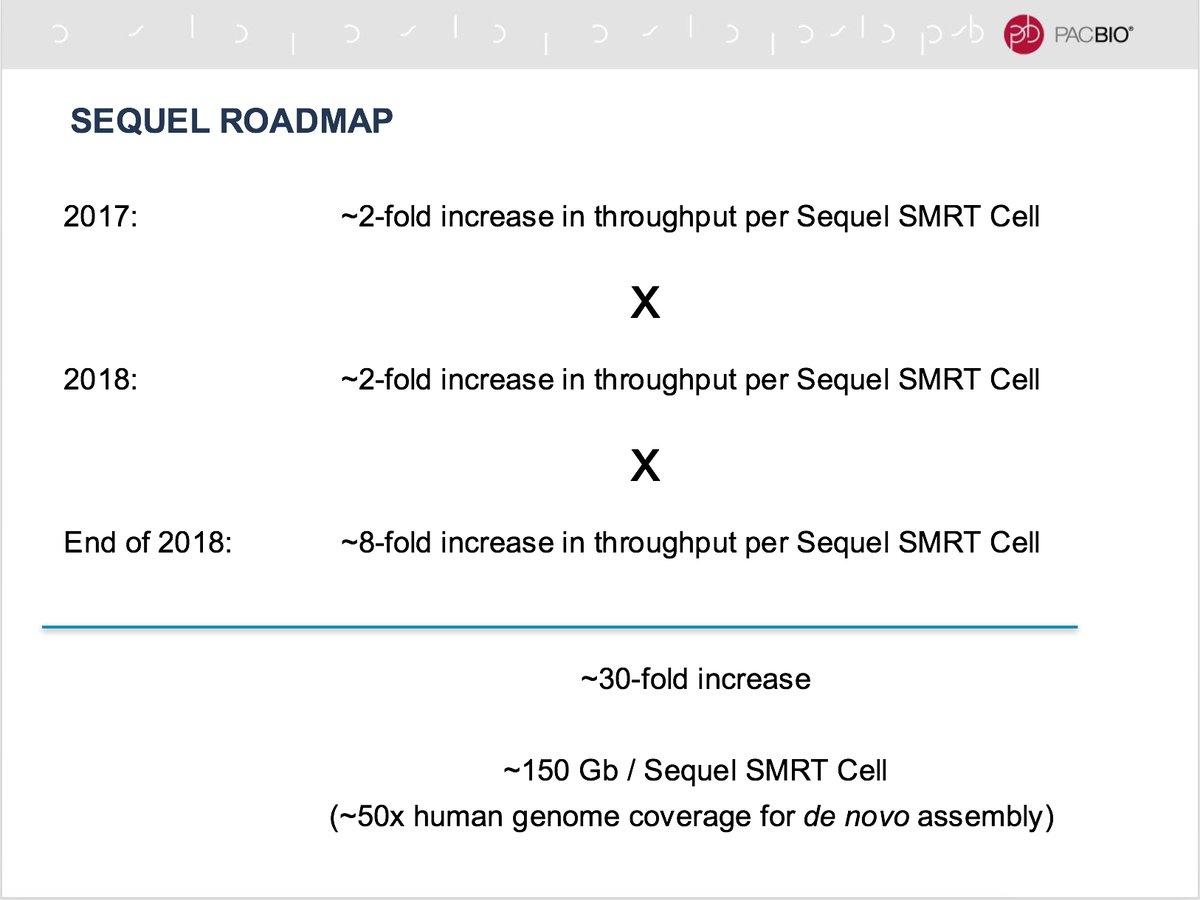

The Sequel roadmap also looks promising -

In the meanwhile, much-hyped Oxford Nanopore continues to fall behind. More on that in the following comment.

3. AGBT and Jared Simpson’s Slides on Nanopore Base Calling

The AGBT conference took place over the last week in Florida. We searched extensively on the conference’s webpage for videos of talks, but could not find even the list of final abstracts. Therefore, the readers may sift through Twitter hashtag #AGBT17 to find out what was going on.

Those interested in Nanopore algorithms may enjoy Jared Simpson’s slides from the conference and his recent paper - Detecting DNA cytosine methylation using nanopore sequencing. Jared is definitely one of the brightest stars in an otherwise fading constellation. Overall, it is clear that the ‘hype versus reality’ gap is increasing rapidly for ONT sequencing. For example, here is a July 2015 prediction for what would happen in the following 12 months from one of the clowns hyping ONT.

- We will see the first full human genome sequenced using only Oxford Nanopore data. The cost will be comparable to current techniques.

- Genotyping and consensus accuracy will be very high, more than capable of accurately calling SNVs (arguably we are there already), and better than other technologies at calling structural variation

- Nanopore will become the default platform for calling base modifications (5mC, 5hmC etc)

- All of the above will be possible without seeing a single A, G, C, or T (i.e. it will all be possible without base-calling the data)

Almost two years later, we have none of the above. Surely a human genome was sequenced (no biggie) at a

great cost, but even the experts are struggling with assembling the reads.

Koren and Phillippy wrote - “nanopore base caller is

underperforming on human, either due to DNA modifications or sequence contexts not seen in the training data”.

Simpson is helping in developing the base caller, but it is still in the research stage, while the company is

rapidly bleeding money. Speaking of ‘nanopore becoming default platform for calling base modifications’, do

not expect it coming in the next five years assuming the company survives. For the last point,

check “Business analysis - Oxford

Nanopore”.

4. Minimizer Delight

Those, who like minimizers (algorithms, not the other kind), will enjoy the following paper.

Improving the performance of minimizers and winnowing schemes

The minimizers scheme is a method for selecting k-mers from sequences. It is used in many bioinformatics software tools to bin comparable sequences or to sample a sequence in a deterministic fashion at approximately regular intervals, in order to reduce memory consumption and processing time. Although very useful, the minimizers selection procedure has undesirable behaviors (e.g., too many k-mers are selected when processing certain sequences). Some of these problems were already known to the authors of the minimizers technique, and the natural lexicographic ordering of k-mers used by minimizers was recognized as their origin. Many software tools using minimizers employ ad hoc variations of the lexicographic order to alleviate those issues. We provide an in-depth analysis of the effect of k-mer ordering on the performance of the minimizers technique. By using small universal hitting sets (a recently defined concept), we show how to significantly improve the performance of minimizers and avoid some of its worse behaviors. Based on these results, we encourage bioinformatics software developers to use an ordering based on a universal hitting set or, if not possible, a randomized ordering, rather than the lexicographic order. This analysis also settles negatively a conjecture (by Schleimer et al.) on the expected density of minimizers in a random sequence.

5. Algorithm for Mapping Long Reads Rapidly

A fast approximate algorithm for mapping long reads to large reference databases

Emerging single-molecule sequencing technologies from Pacific Biosciences and Oxford Nanopore have revived interest in long read mapping algorithms. Alignment-based seed-and-extend methods demonstrate good accuracy, but face limited scalability, while faster alignment-free methods typically trade decreased precision for efficiency. In this paper, we combine a fast approximate read mapping algorithm based on minimizers with a novel MinHash identity estimation technique to achieve both scalability and precision. In contrast to prior methods, we develop a mathematical framework that defines the types of mapping targets we uncover, establish probabilistic estimates of p-value and sensitivity, and demonstrate tolerance for alignment error rates up to 20%. With this framework, our algorithm automatically adapts to different minimum length and identity requirements and provides both positional and identity estimates for each mapping reported. For mapping human PacBio reads to the hg38 reference, our method is 290x faster than BWA-MEM with a lower memory footprint and recall rate of 96%. We further demonstrate the scalability of our method by mapping noisy PacBio reads (each ≥5 kbp in length) to the complete NCBI RefSeq database containing 838 Gbp of sequence and >60,000 genomes.

6. Bioinformaticians’ Foray into Escapism

Escapism is defined as “the avoidance of unpleasant, boring, arduous, scary, or banal aspects of daily life”. If cleaning up of Excel files full of erroneous annotations fits the description, you may like this tool.

Escape Excel: a tool for preventing gene symbol and accession conversion errors

Background: Microsoft Excel automatically converts certain gene symbols, database accessions, and other alphanumeric text and numbers into dates, scientific notation, and other numerical representations, which may lead to subsequent, irreversible corruption of the imported text. A recent survey of popular genomic literature estimates that one-fifth of all papers with supplementary data containing gene lists in Excel format suffer from this issue.

Results: Here, we present an open-source tool, Escape Excel, which prevents these erroneous conversions by generating an escaped text file that can be safely imported into Excel. Escape Excel is available in the Galaxy web environment and can be installed through the Galaxy ToolShed. Escape Excel is also available as a stand-alone, command line Perl script on GitHub (http://www.github.com/pstew/escape_excel). A Galaxy test server implementation is accessible at http://apostl.moffitt.org.

Conclusions: Escape Excel detects and escapes a wide variety of problematic text strings so that they are not erroneously converted into other representations upon importation into Excel. Examples of problematic strings include date-like strings, time-like strings, leading zeroes in front of numbers, and long numeric and alpha-numeric identifiers that should not be automatically converted into scientific notation. It is hoped that greater awareness of these potential data-corruption issues, together with diligent escaping of text files prior to importation into Excel, will help to reduce the amount of Excel-corrupted data in scientific analyses and publications.

7. Recycler: an algorithm for detecting plasmids from de novo assembly graphs

Motivation: Plasmids and other mobile elements are central contributors to microbial evolution and genome innovation. Recently, they have been found to have important roles in antibiotic resistance and in affecting production of metabolites used in industrial and agricultural applications. However, their characterization through deep sequencing remains challenging, in spite of rapid drops in cost and throughput increases for sequencing. Here, we attempt to ameliorate this situation by introducing a new circular element assembly algorithm, leveraging assembly graphs provided by a conventional de novo assembler and alignments of paired-end reads to assemble cyclic sequences likely to be plasmids, phages and other circular elements. Results: We introduce Recycler, the first tool that can extract complete circular contigs from sequence data of isolate microbial genomes, plasmidome and metagenome sequence data. We show that Recycler greatly increases the number of true plasmids recovered relative to other approaches while remaining highly accurate. We demonstrate this trend via simulations of plasmidomes, comparisons of predictions with reference data for isolate samples, and assessments of annotation accuracy on metagenome data. In addition, we provide validation by DNA amplification of 77 plasmids predicted by Recycler from the different sequenced samples in which Recycler showed mean accuracy of 89% across all data types—isolate, microbiome and plasmidome. Availability and Implementation: Recycler is available at http://github.com/Shamir-Lab/Recycler

8. De novo assembly of viral quasispecies using overlap graphs

A viral quasispecies, the ensemble of viral strains populating an infected person, can be highly diverse. For optimal assessment of virulence, pathogenesis and therapy selection, determining the haplotypes of the individual strains can play a key role. As many viruses are subject to high mutation and recombination rates, high-quality reference genomes are often not available at the time of a new disease outbreak. We present SAVAGE, a computational tool for reconstructing individual haplotypes of intra-host virus strains without the need for a high-quality reference genome. SAVAGE makes use of either FM-index based data structures or ad-hoc consensus reference sequence for constructing overlap graphs from patient sample data. In this overlap graph, nodes represent reads and/or contigs, while edges reflect that two reads/contigs, based on sound statistical considerations, represent identical haplotypic sequence. Following an iterative scheme, a new overlap assembly algorithm that is based on the enumeration of statistically well-calibrated groups of reads/contigs then efficiently reconstructs the individual haplotypes from this overlap graph. In benchmark experiments on simulated and on real deep coverage data, SAVAGE drastically outperforms generic de novo assemblers as well as the only specialized de novo viral quasispecies assembler available so far. When run on ad-hoc consensus reference sequence, SAVAGE performs very favorably in comparison with state-of-the-art reference genome guided tools. We also apply SAVAGE on two deep coverage samples of patients infected by the Zika and the hepatitis C virus, respectively, which sheds light on the genetic structures of the respective viral quasispecies.

9. Genome of Deadly Salmonella from the 16th Century

Salmonella enterica genomes recovered from victims of a major 16th century epidemic in Mexico

Indigenous populations of the Americas experienced high mortality rates during the early contact period as a result of infectious diseases, many of which were introduced by Europeans. Most of the pathogenic agents that caused these outbreaks remain unknown. Using a metagenomic tool called MALT to search for traces of ancient pathogen DNA, we were able to identify Salmonella enterica in individuals buried in an early contact era epidemic cemetery at Teposcolula-Yucundaa, Oaxaca in southern Mexico. This cemetery is linked to the 1545-1550 CE epidemic locally known as ‘cocoliztli’, the cause of which has been debated for over a century. Here we present two reconstructed ancient genomes for Salmonella enterica subsp. enterica serovar Paratyphi C, a bacterial cause of enteric fever. We propose that S. Paratyphi C contributed to the population decline during the 1545 cocoliztli outbreak in Mexico.

10. Copyright Battle - US Copyright Laws Do Not Apply in New Zealand

In ‘Sci-hub is Breaking Laws’ - Argues Chemistry Blogger, Who Himself is Breaking Laws !, we

demonstrated the hypocrisy of a law-breaker telling others to follow laws.

Copyright is not a ‘right’, but rather a legal fiction enforced by the guns. When the guns fail, the ‘right’

will also cease to exist. The battle over sci-hub essentially boils down to whether US copyright laws are

enforceable in other countries. In this context, the kim-dotcom story is worth following.

“We have won. We have won the major legal argument. This is the last five years of my life and it’s an embarrassment for New Zealand.”

He said it was effectively a statement from the court that neither he, his co-accused or Megaupload had broken any New Zealand laws.

“Now they’re trying through the back door to say this was a fraud case. I’m confident going with this judgment to the Court of Appeal.

The ruling today has created an unusual bureaucratic contradiction - the warrant which was served on Dotcom when he was arrested on January 20, 2012, stated he was being charged with “copyright” offences.

Likewise, the charges Dotcom will face in the US are founded in an alleged act of criminal copyright violation.

Readers may also enjoy - How Much Should a Poor Scientist Pay to Access Old Research Papers?